Data modelling for a large MAT

The wrong way

When I first started developing in BI I worked on single schools and MAT demo systems with tiny numbers of students so my approach to data modelling was chuck data in however it comes then play with relationships until the configuration works.

When I switched to developing for a large MAT (c40k kids in 50 schools) I found out the hard way the limitations of this approach-my measures and reports were unusably slow.

As well as the sheer volume of data I had problems because the MAT wanted a report on pupil demographics, attendance and exclusions with historical accuracy- ie not only seeing data for who's FSM, PP etc today but for any point/period in the past as well.

This divides your pupil contextual factors into two types; unchanging, like ethnicity, EAL & KS2 prior attainment that don't change and so can stay on the students table, and changeable, like Pupil Premium eligibility, that can vary depending on the date range you're looking at.

My original approach was to port over to BI the approach used in the back end of most MIS systems -membership tables listing student IDs and the start and end dates of the eligibility. I had unconnected tables used for slicers so if you selected say FSM: Y on one of them I would check for that value and use it plus the date range to filter the relevant membership table then through that the student tbl. The drawback of this approach is that your measures need to do a lot of cross referencing different tables just to get to the kids you want. If you then have to work out attendance for the selection the whole operation gets painfully slow (30s to 1minute load times).

So how did I address this problem?

I want to walk you through my process because I think that's more useful than just the solution I landed on because whether that will work for you and be a good return on effort invested will depend on the volume of data you've got and what you want to do with it.

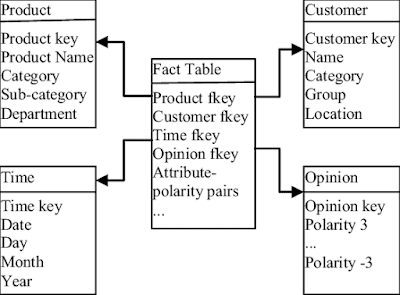

So I started off by googling best practices for data modelling (accepting as always that the examples will be from business and I'll have to work out how they apply to education). The first thing I found out is that principles of relational database design don't always apply to making the most efficient model for BI (this was good cos I'm about to break several of them). There seems to a consensus that the best approach for bi modelling is to organise data into dimension and fact table(s). Dimension tables hold information about things (customers and products being classic examples) fact tables records events (like sales). The fact table lists keys for each dimension so they branch off it like points of a star, which is why this approach is called a star schema.

So for my report I decided to build a fact table with a record for each day (when school is closed) and each AM/PM session (when school is open) for each pupil. A massive table in other words. The fact table records the following for each record:

Date

Enrolment status, Year Group, Tutor Group,

FSM, PP, LAC, SEN status, SEN Need and English Proficiency

ExclusionID, LeaverID (for students/days where exclusion /leaver records exist).

Attendance info (session, mark, minutes late and status (statutory, pre-statutory or post-statutory) plus 1/0 for possible, present, authabs, unauthabs)

The dimension tables that link to this are Date table (record for each date plus academic year, day of week etc)

Student table

Exclusions table

Leavers table

Switch tables (for FSM etc with yes and no that link to the info stored as 1 or 0 on the fact table)

Does it work?

Hell yeah. For what I wanted the fact table was 10x faster, even when using DISTINCTCOUNT(StudentFact[studentid]) to get a count of students, which is often a source of slow queries in DAX. Contextual filters applied directly to the fact table so there was no more complicated date recording and checking in measures.

How do you do it?

More than one way to skin a cat here, I did it in my data warehouse which sits on a Microsoft SQL server. I've found in many areas (notably assessment too) that while you can do calculations in DAX it's better to do as much as possible in the data source. For that reason I wouldn't recommend buying an MIS aggregator product like Bromcom's Vision as for less money (assuming they won't give it away free forever) and a bit of elbow grease you can build your own set up that pulls data from each MIS *and* preps it nicely for BI.

I won't go into a blow by blow of all the queries (feel free to ask on twitter if you're interested) but briefly I use SQL Server Integration Services to scrap then rebuild the fact table joining a calendar source with every date to the enrolment table for a list of dates, student IDs and enrolment statues, then joining that by pupil and date to add the appropriate values from all the contextual factor membership/attendance/leaver/exclusion tables.

What about assessment, behaviour etc?

Again multiple right answers here but my approach has not been to make a massive model with multiple fact tables. Instead, at MAT scale, I think it's better to have dedicated reports. Of course we're interested in attendance and behaviour in the context of attainment, but as the unit of time for assessment data is the term (or maybe half term) then I summarise the attendance and behaviour data to that period for assessment reports.

I've found that Assessment doesn't fit as neatly into a star schema (at least at KS4) because as well as individual assessments I also want a record for each student and assessment point with summarised data like Attainment 8 and EBacc APS. I've found that the speed gain from having these precalculated in the data warehouse more than compensates for the awkwardness of having two fact tables instead of one. In this model (below) it's more like a ladder with a student dimension linked to the fact tables either side and 'Switch' tables (created in DAX using DATATABLE) that allow the user with a single slicer to filter the summarised and the underlying assessment data.

Good Post! Thank you so much for sharing this pretty post

ReplyDeletePower BI Online Training India

Power BI Online Training Hyderabad